Hi everybody,

Here is the reproducible:

import ROOT

import sys

ROOT.gInterpreter.Declare('''

#include "Math/Point3D.h"

#include "Math/Vector3D.h"

#include <random>

using namespace ROOT::Math;

using namespace ROOT::VecOps;

using std::cout, std::endl, std::stringstream, std::vector, std::string, std::runtime_error;

#define SPEED_OF_LIGHT 299.792458

RVec <double> dToImpact(const RVec<XYZVector>& hits, const XYZVector& rImpact){

auto getDistance = [&](const XYZVector& hit) { return (hit - rImpact).R(); };

return Map(hits, getDistance);

}

RVec <double> dToLine(const RVec <XYZVector>& hits, const XYZVector& vtx, const XYZVector& p){

RVec <double> distance;

for (const auto& hit:hits){

double d = (hit - vtx).Cross(p.Unit()).R();

distance.push_back(d);

}

return distance;

}

RVec <bool> selectHits(const RVec<double>& dToLine, const RVec<int>& layer_hit, bool only_closest=true, int n_layers=10, double cyl_cut=9999.){

int nHits = dToLine.size();

RVec <bool> selected(nHits);

if(!only_closest){

for (int i=0; i < nHits; ++i)

if (dToLine[i] < cyl_cut && layer_hit[i] < n_layers)

selected[i] = true;

return selected;

}

for (int layer=0; layer<n_layers; ++layer){

map<int, double> layer_hits;

for (int i=0; i < nHits; ++i)

if(dToLine[i] < cyl_cut && layer_hit[i] == layer)

layer_hits[i] = dToLine[i];

int min_idx =(*min_element(layer_hits.begin(), layer_hits.end(),

[](const auto& l, const auto& r) { return l.second < r.second; }) ).first ;

selected[min_idx] = true;

}

return selected;

}

double fitFunc(const RVec <double>& x, const RVec<double>& y, const int par=0){

if(x.size() <= 1) return 0.;

TGraphErrors gr(x.size(), &x[0], &y[0]);

TF1* fit = new TF1("fit", "pol1");

gr.Fit(fit);

fit = gr.GetFunction("fit");

if (par == 3) return 1./fit->GetParameter(1);

return fit->GetParameter(par);

}

''')

ch = ROOT.TChain("test")

# # 2f_Z_hadronic data

ch.Add("./test.root")

df = ROOT.RDataFrame(ch)

df = df.Filter("nECALHits > 0 && abs(xyzCluster.Z()) < 2200.")

df = df.Define("dToImpact", "dToImpact(xyzECALHit, xyzTrackAtCalo)")\

.Define("dToLine", "dToLine(xyzECALHit, xyzTrackAtCalo, pTrackAtCalo)")\

.Define("sel_frank", "selectHits(dToLine, layerECALHit, true, 10, 9999.)")\

.Define("tof0", "fitFunc(dToImpact[sel_frank], tECALHit[sel_frank], 0)")\

.Define("tof0_inv", "1./fitFunc(dToImpact[sel_frank], tECALHit[sel_frank], 0)")\

.Define("slope0", "fitFunc(dToImpact[sel_frank], tECALHit[sel_frank], 1)")\

.Define("slope_inv", "1./fitFunc(dToImpact[sel_frank], tECALHit[sel_frank], 1)")\

.Define("slope_inv_expected", "fitFunc(dToImpact[sel_frank], tECALHit[sel_frank], 3)")

df.Snapshot("new_tree", "./new_test.root", ["tof0", "tof0_inv", "slope0", "slope_inv", "slope_inv_expected"])

Here is the root file:

test.root (104.6 KB)

What you shall see:

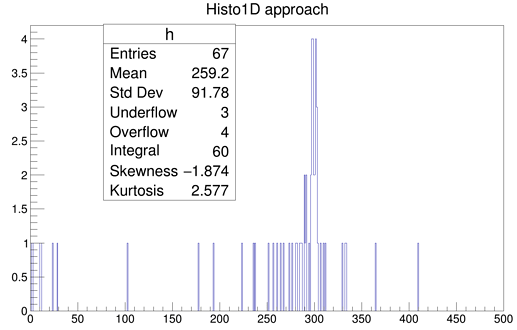

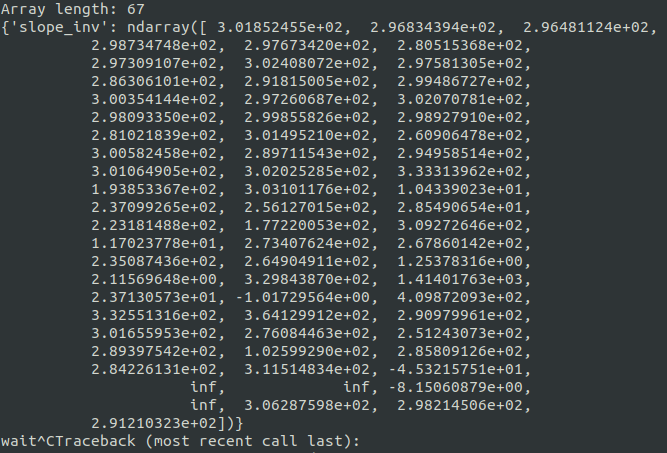

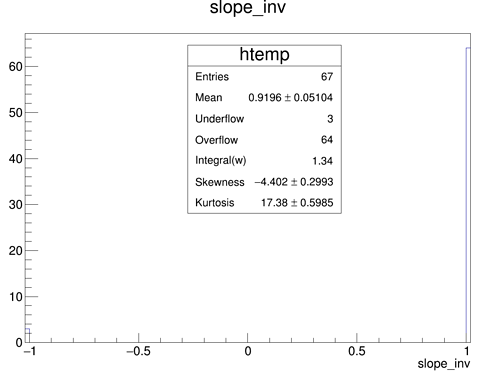

slope_inv leaf in the new root file snapshot is empty! (extending draw limits show all values at 1.)

What is expected:

Some values around ~300 region. Because you can see from slope0 and fit std output fit values are fine.

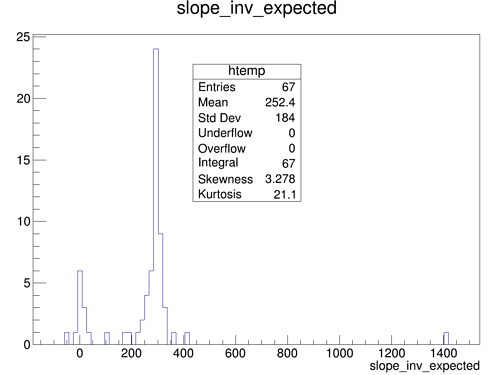

Also, if I make fitFunc() to return inverse parameter in advance, it works! see slope_inv_expected…

What is also puzzling that for 0st parameter everything works fine…

Sorry, I couldn’t make more simple reproducible.

Why this exact peace of code breaks???

Please help… So frustrating

…

…

ROOT Version: 6.22/00

Python: 3.7.6

, BUT it shows few events in under/overflow bins

, BUT it shows few events in under/overflow bins