I’ve been adapting the RooStats Feldman-Cousins method documented here by using this tutorial

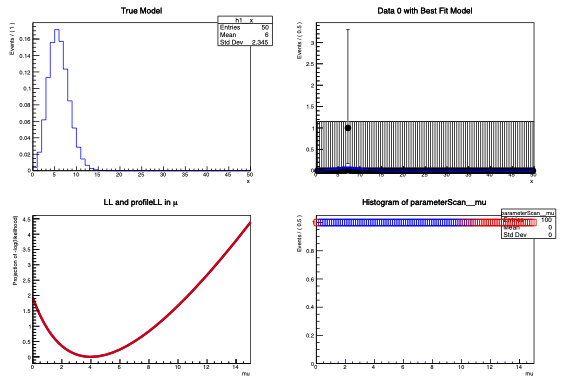

I’ve been using a simplified setup just to understand how it works in ROOT: I generate a simple one-event dataset from a model, and then test it against that same model.

Example.C (4.3 KB)

Data_0.root (5.4 KB)

My question is this: the tutorial claims to reproduce the FC neutrino oscillation result by computing the 90% C.L. interval. Following it blindly, I had assumed this came from the line

fc.SetTestSize(.1);

which the documentation describes as “set the size of the test (rate of Type I error) ( Eg. 0.05 for a 95% Confidence Interval)”. But I notice that there is also a function to set the confidence level explicitly, documented with a lot of the same wording as the one for setting the test size.

The example I have has two functions, FC_Test1() and FC_Test2(). The only difference between them is that FC_Test2() also sets the confidence level explicitly via

fc.SetConfidenceLevel(.95);

Test 1 yields bounds of [0.375, 10.875] and Test 2 yields bounds of [0.075, 10.575]. If I comment out the SetTestSize call in Test 2, I recover the bounds of [0.375, 10.875]. Is it inappropriate to set it both ways? If so, why?
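
To make the question concrete, here is a minimal sketch of the relationship I would expect between the two setters, assuming the confidence level is simply 1 minus the test size and that both calls write to the same stored value. `ToyIntervalCalculator` and `confidenceLevelAfterBothCalls` are hypothetical names for illustration, not the RooStats classes. I have not confirmed that RooStats behaves this way, and the call order below is an assumption. The sketch does not attempt to reproduce the bounds above.

```cpp
#include <cassert>
#include <cmath>

// Toy stand-in for an interval calculator; NOT the RooStats implementation.
// Assumption: both setters write to a single stored test size (alpha),
// with confidence level = 1 - alpha.
struct ToyIntervalCalculator {
    double size = 0.05;  // test size alpha (rate of Type I error)

    void SetTestSize(double alpha) {
        assert(alpha > 0.0 && alpha < 1.0);
        size = alpha;
    }

    void SetConfidenceLevel(double cl) {
        assert(cl > 0.0 && cl < 1.0);
        size = 1.0 - cl;  // overwrites whatever SetTestSize stored
    }

    double TestSize() const { return size; }
    double ConfidenceLevel() const { return 1.0 - size; }
};

// Calls both setters in one (assumed) order and reports the result.
double confidenceLevelAfterBothCalls() {
    ToyIntervalCalculator fc;
    fc.SetTestSize(0.1);          // requests a 90% C.L. interval
    fc.SetConfidenceLevel(0.95);  // replaces it: alpha is now 0.05
    return fc.ConfidenceLevel();
}
```

In this toy model the two calls are not independent settings: whichever is called last decides the interval, so setting 0.1 and 0.95 together asks for two different confidence levels and only one of them is used.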