Hello TMVA experts,

I am trying to use the standalone C++ class generated for BDTG and am having some trouble with it.

The BDTG response is completely different when I compare the output shown in the TMVA GUI with the output of the auto-generated C++ class used in a homemade program.

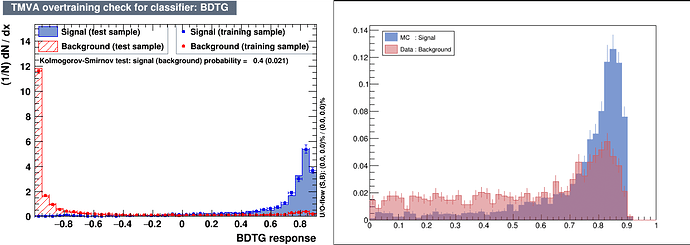

Here, the TMVAGUI output on the left and the c++ class output on the right :

I don't understand the difference, since the input events are exactly the same in TMVA and in my homemade program. I also tried with a Reader, but I get the same result.

To investigate, I tried the same procedure with MLPBNN, and there it works fine.

Is there perhaps a difference in how the BDTG class should be used compared with the MLPBNN one?

Thank you for your help.

Cheers,

Maxime

Hi,

Just to clarify: is the output consistent between the TMVA::Reader and the standalone C++ class?

If so, that would hint at a mismatch between the setups, because the output shown after running TrainAllMethods and TestAllMethods is generated in a very similar way to how the Reader does it (by recreating the method from the XML weight file).

Notice that your plot does not include negative outputs. Could you regenerate your plot using the range -1 to 1?

Also note that the TMVA plots are normalised so that each histogram sums to 1 (i.e. the signal histogram is normalised to sum to 1, and the background histogram is normalised to sum to 1).

Cheers,

Kim

Hi,

Thank you very much, you pointed out my silly mistake: my neural net output is in the range [0,1], whereas the BDT output is in the range [-1,1].

Now that I have normalized over the correct range, the outputs are consistent.

Cheers,

Maxime

Great Maxime!

Please mark the topic as solved if indeed it is, and good luck with your machine learning!

Cheers,

Kim