ROOT Version: 6.16

Platform: lxplus

Dear experts,

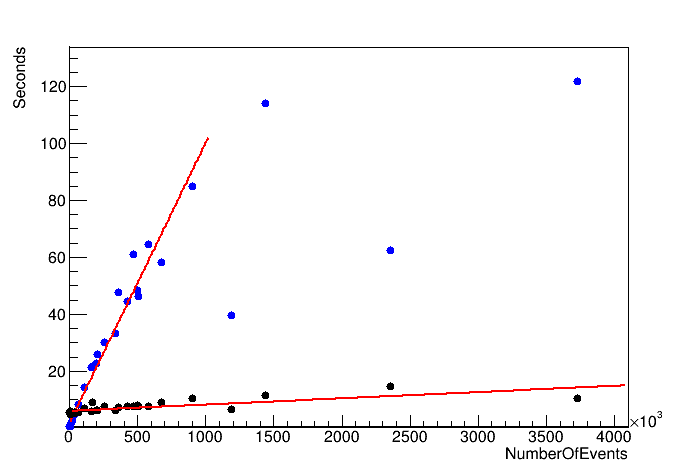

I’ve looked for ways to speed up the looping over TTrees in PyROOT and RDataFrame seemed like the preferred way to do it. But when I’ve tried to implement it in my code the performance was either the same or only slightly better than for the pure python script.

I’ve attached the basic versions of the looping script written in C++, python with for event loop and python with RDataFrame and the example .root file. The approximate time it takes to loop over the tree is:

C++: 0.2 sec

Python (for event): 6.7 sec

Python (RDF, no MT): 5 sec

Python (RDF, implicit MT): 6.5 sec

Is there something I’m doing wrong in the RDataFrame script?

Thanks in advance.

ConvertTreeRDF.py (1.2 KB)

ConvertTree.py (1.3 KB)

ConvertTree.cpp (3.1 KB)

Input file (cernbox)