I have an experimental energy distribution:

```cpp
h_ffEcm[i] = new TH1F(h_name,h_title,1000,0,250);
```

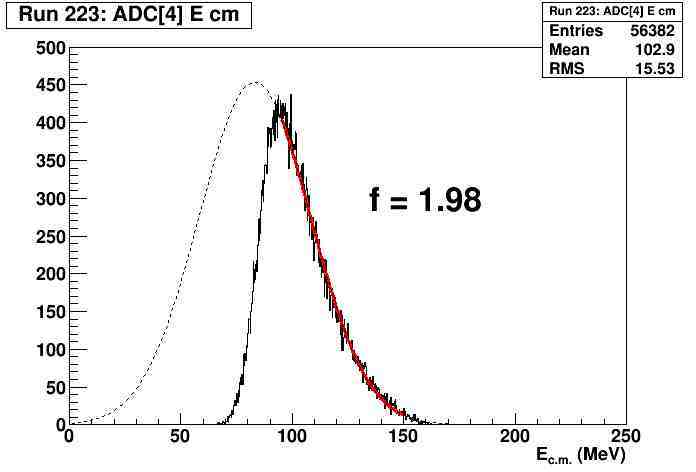

It has a high energy threshold, so the lower-energy part of the spectrum is cut off (see figure 1).

To estimate how much of the true distribution I'm missing, I make a Gaussian fit

```cpp
fit[i] = new TF1(f_name,"gaus",0,250);
```

using only the high-energy part of the spectrum (red line):

```cpp
h_ffEcm[i]->Fit(fit[i],"QB","",95,150);
```

Then I integrate the experimental distribution (`h_ffEcm[i]->Integral()`) and the Gaussian (`fit[i]->Integral(0,250)`), and take the ratio (histogram integral over Gaussian integral). This ratio is my correction factor, the number shown in the figure.

You may notice that the factor is close to 2, whereas a trained eye would say it should be close to 0.5.

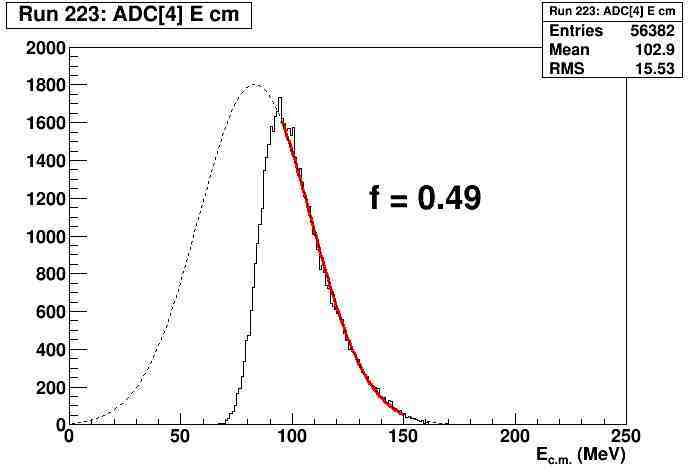

If I rebin the histogram

```cpp
h_ffEcm[i] = new TH1F(h_name,h_title,250,0,250);
```

i.e. 1 MeV bins instead of 0.25 MeV bins, the factor becomes 0.49 (figure 2), which is the correct value.

To recap: with 0.25 MeV binning, the integral of the fit function is 4 times smaller than it should be. In terms of the TH1 constructor arguments, the integral is scaled by (almost exactly) xbins/nbins, where xbins = xup - xlow = 250 is the axis range; that ratio is just the bin width.
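A short derivation of where that factor would come from, assuming the Gaussian is fitted to the bin contents (counts per bin) of a histogram with bin width $\Delta = (x_\mathrm{up} - x_\mathrm{low})/n_\mathrm{bins}$, and that the full Gaussian corresponds to $N$ events:

$$
f(x) \approx N\,\Delta\,\frac{1}{\sigma\sqrt{2\pi}}\exp\!\left(-\frac{(x-\mu)^2}{2\sigma^2}\right)
\quad\Longrightarrow\quad
\int f(x)\,dx \approx N\,\Delta,
\qquad
\sum_i c_i = N_\mathrm{hist}.
$$

Under this assumption the function integral has to be divided by $\Delta$ before it is compared with the histogram integral $\sum_i c_i$. With $\Delta = 0.25$ MeV the undivided integral is 4 times too small; with $\Delta = 1$ MeV the division is a no-op, which would explain why the 1 MeV binning gives the expected factor.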

Regardless of binning, `h_ffEcm[i]->Integral()` = 56382 (also the number of entries).

If nbins=1000, xbins=250: `fit[i]->Integral(0,250)` = 28501.15.

If nbins=250, xbins=250: `fit[i]->Integral(0,250)` = 114161.82.

Can anybody explain this behavior? Is it a bug, or an artifact of mixing objects (TF1 and TH1)?