Hi,

As the title suggests, I recently ran into an issue where my Garfield++ program no longer runs at 100% CPU load. As a result, it takes longer to finish and wastes “resources” when it runs on a supercomputer. Any suggestion on how to alleviate this is greatly appreciated.

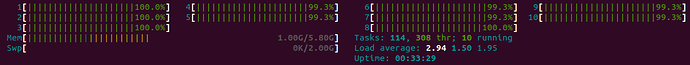

Previously, this is the typical running load of my code (display from htop):

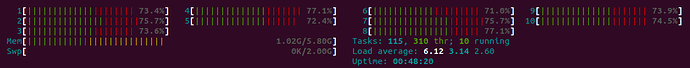

As one can see, all threads are utilized at 100%, and this is kept up throughout the execution. However, at this point, this is no longer the case. As I increase the number of electrodes to be calculated for signals, the running load becomes like this

The load on all threads drops by about 30%. I narrowed the cause down to this part of my code:

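```cpp
// At the top of the file:
#include <iostream>
#include <omp.h>

#include "Garfield/AvalancheMicroscopic.hh"
#include "Garfield/DriftLineRKF.hh"

using namespace Garfield;

// Inside the function (sensor, electrons and index are defined earlier;
// electrons holds x, y, z, t for each primary electron):
double xi1, yi1, zi1, ti1;
double xi2, yi2, zi2, ti2;
int Aright = 0, Aleft = 0;
int count = index;
bool calculate_signal = true;

int max = omp_get_max_threads();
std::cout << "Maximum number of threads: " << max << std::endl;
omp_set_dynamic(0);
omp_set_num_threads(max);

#pragma omp parallel for
for (int k = 0; k < index; k++) {
  // Simulate the avalanche started by primary electron k.
  AvalancheMicroscopic aval;
  aval.SetSensor(&sensor);
  aval.EnableSignalCalculation(calculate_signal);
  aval.AvalancheElectron(electrons.at(k * 4 + 0), electrons.at(k * 4 + 1),
                         electrons.at(k * 4 + 2), electrons.at(k * 4 + 3),
                         0.1, 0, 0, 0);
  const int np = aval.GetNumberOfElectronEndpoints();

  DriftLineRKF drift;
  drift.SetSensor(&sensor);
  drift.EnableSignalCalculation(calculate_signal);

  // Inner loop: drift an ion from the starting point of each avalanche electron.
  for (int j = np; j--;) {
    // Declared inside the parallel loop so each thread has its own copies;
    // declared before the parallel region they would be shared (a data race).
    double xe1, ye1, ze1, te1, e1;
    double xe2, ye2, ze2, te2, e2;
    int status;
    aval.GetElectronEndpoint(j, xe1, ye1, ze1, te1, e1,
                             xe2, ye2, ze2, te2, e2, status);
    drift.DriftIon(xe1, ye1, ze1, te1);
  }
}
```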

Specifically, it is the inner for loop that causes the issue: when I comment it out, all threads run at ~100%. If anyone has come across this issue or knows how to fix it, please let me know.