ROOT Version: 6.20/05

Platform: Ubuntu 16.04

Compiler: gcc 5.4

Dear experts,

I’m doing a least-squares fit with a custom class implementing `double operator()(const double *par)`, which I pass to the fitter via `fitter.FitFCN(npars, global_chi2, 0, datasize, true)`.

I’m having trouble understanding how the parameter errors are calculated.

If I compare the standard errors from Minuit2 (from the error matrix) with the errors from Minos, I find the same values.

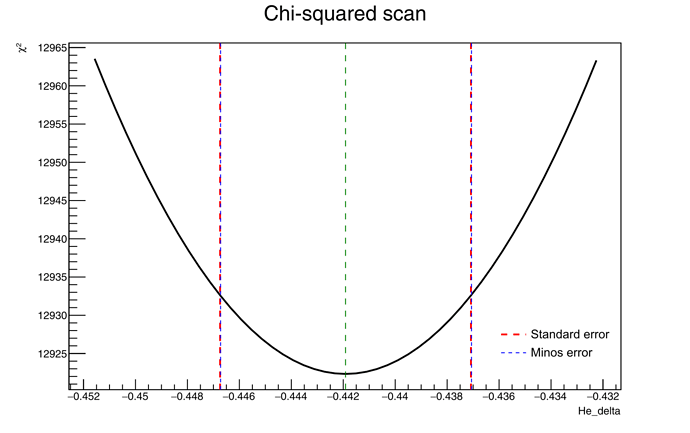

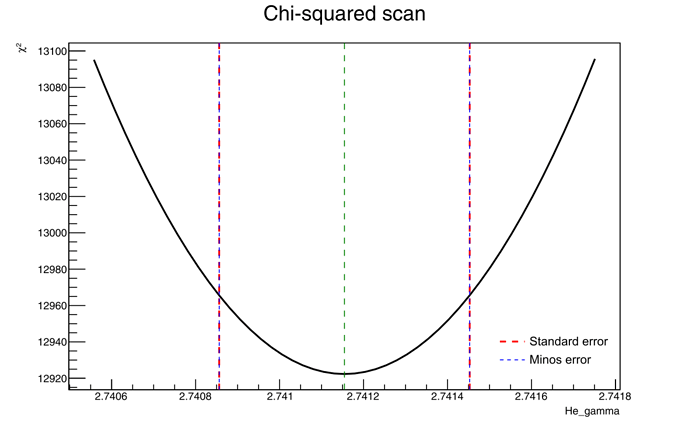

See the plots of the chi2 scan (obtained with `fitter.Result().Scan`) for two parameters. The red lines are the standard errors, while the blue lines are the Minos errors.

As you see, the red and blue lines are on top of each other for both parameters.

However, the intercepts with the chi2 curves do not make sense to me.

I set the Minuit ErrorDef to 1, so I was expecting the Minos errors to correspond to the parameter values for which chi2 = chi2_min + 1, but this is clearly not the case: for He_delta the difference in chi2 is ~11, while for He_gamma it is ~43.

Is there something I don’t understand?
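To make the expectation concrete, here is a minimal sketch of the chi2_min + 1 rule on a one-dimensional scan. It is plain C++ with no ROOT dependency; the function name `upper_crossing` and the bisection approach are illustrative assumptions, not what Minos does internally.

```cpp
#include <cmath>
#include <functional>

// Find the point to the right of the minimum where chi2(p) = chi2(p_min) + up,
// by bisection. Assumes chi2 rises monotonically on [p_min, p_hi] and that
// chi2(p_hi) lies above the target level.
double upper_crossing(const std::function<double(double)>& chi2,
                      double p_min, double p_hi, double up = 1.0) {
    const double target = chi2(p_min) + up;
    double lo = p_min;
    double hi = p_hi;
    for (int i = 0; i < 200; ++i) {
        const double mid = 0.5 * (lo + hi);
        if (chi2(mid) < target) {
            lo = mid;  // still below the target level: move right
        } else {
            hi = mid;  // at or above the target level: move left
        }
    }
    return 0.5 * (lo + hi);
}
```

For an exactly parabolic scan chi2(p) = chi2_min + ((p - p0)/sigma)^2, the returned crossing with `up = 1` is p0 + sigma, which is the behaviour I expected to see in the plots.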

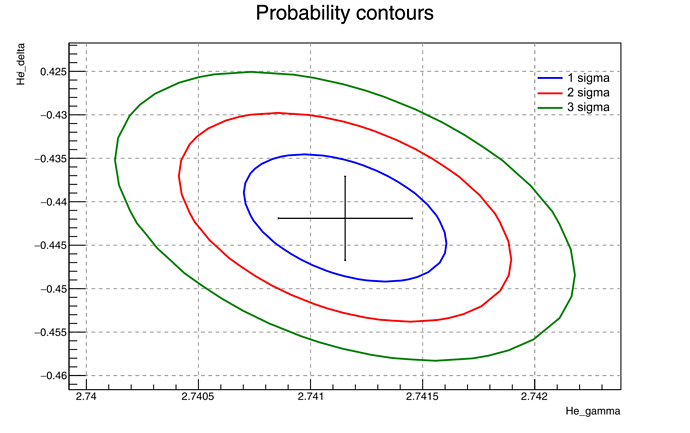

I compute the 2D contours for various sigma levels, using `SetErrorDef(TMath::ChisquareQuantile(TMath::Erf(s/TMath::Sqrt2()), 2))` with s = 1, 2, or 3.
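For reference, the ErrorDef values produced by that expression can be checked without ROOT, because the chi2 quantile for 2 degrees of freedom has the closed form Q(p) = -2 ln(1 - p). The sketch below is plain C++; the function name `error_def_2d` is hypothetical.

```cpp
#include <cmath>

// Delta-chi2 level that encloses, for two jointly estimated parameters, the
// same probability as +-s sigma of a one-dimensional Gaussian.
// Uses 1 - erf(s / sqrt(2)) = erfc(s / sqrt(2)) and the 2-dof closed form
// Q(p) = -2 ln(1 - p) of the chi2 quantile.
double error_def_2d(double s) {
    return -2.0 * std::log(std::erfc(s / std::sqrt(2.0)));
}
```

This gives roughly 2.30, 6.18 and 11.83 for s = 1, 2 and 3, so the contours are drawn at levels well above the ErrorDef of 1 used for the one-parameter errors.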

The marker at the center is the best-fit value, with the error bars given by the standard Minuit errors (which are equal to the Minos errors, as pointed out above).

Again, I’m confused.

I thought that the 1 sigma Minos errors for one parameter would be equal to the leftmost and rightmost edges of the 2D 1 sigma contour, as written in "The interpretation of errors" by James, but as you can see this is not the case.

Am I doing something wrong?

Since my chi2/ndf is not 1 (it’s ~13, very bad, I know…), I used `fitter.Config().SetNormErrors()` to rescale the parameter errors by sqrt(chi2/ndf), but nothing changed.

Is this because `FitFCN` does not internally set `fFitType = ROOT::Math::FitMethodFunction::kLeastSquare`?

Is there a way to tell `Fitter` that I’m performing a least-squares fit?

Apparently, the last argument to `FitFCN` (chi2 = true) doesn’t do this.

Related to the previous point: it looks like Minos errors are not rescaled by sqrt(chi2/ndf).

Can I simply multiply the Minos errors by sqrt(chi2/ndf), or is this wrong?
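The rescaling I have in mind is just the multiplication below. This is a minimal sketch of the arithmetic only, in plain C++ with a hypothetical helper name; it makes no claim that the operation is statistically justified for asymmetric Minos errors, which is exactly my question.

```cpp
#include <cmath>
#include <stdexcept>

// Scale a parameter error by sqrt(chi2 / ndf).
// Hypothetical helper for illustration; not part of ROOT.
double rescale_error(double error, double chi2, int ndf) {
    if (ndf <= 0 || chi2 < 0.0) {
        throw std::invalid_argument("need ndf > 0 and chi2 >= 0");
    }
    return error * std::sqrt(chi2 / ndf);
}
```

With chi2/ndf ~ 13 the scale factor is sqrt(13), about 3.6, applied separately to the lower and the upper Minos error.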

I can compute the confidence interval of the fitted model with `fitter.Result().GetConfidenceIntervals`, asking it to rescale the interval by sqrt(chi2/ndf), but in this case there is an additional factor from the Student's t distribution, which should be used only when the measurement uncertainties are unknown.

However, I know the uncertainties of my data points, so in theory it would be more correct to just multiply the interval by sqrt(chi2/ndf), right?

Thanks.