Hi,

I found an unexplained feature in my histogram. I am using RDataFrame to histogram an energy spectrum.

I want to apply a scale factor of, say, 1.11 to energies above 500 MeV.

I use a lambda function:

    auto applySF = [](double en, double sf) {
        return (en > 500) ? en * sf : en;
    };

and the implementation is as follows:

    double scale = 1.11;
    // Define returns a new node, so keep the result
    rdf = rdf.Define("scale", to_string(scale));
    rdf = rdf.Define("Enprime", applySF, {"Energy", "scale"});
    auto histo = rdf.Histo1D(TH1DModel, "Enprime");

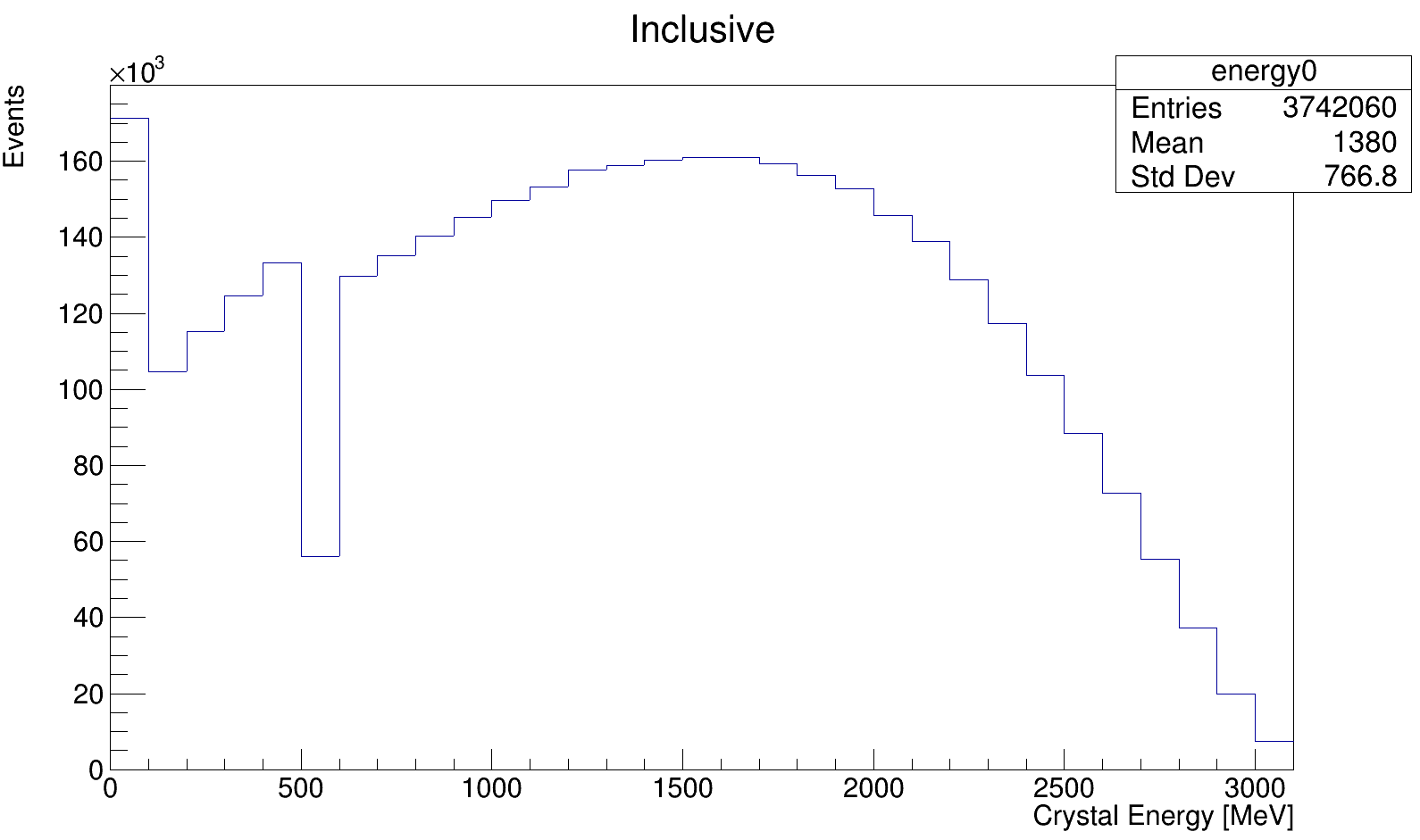

As a result, my histogram shows a strange dip in the first bin above 500 MeV, and it looks fine after that (the scale seems to be applied correctly):

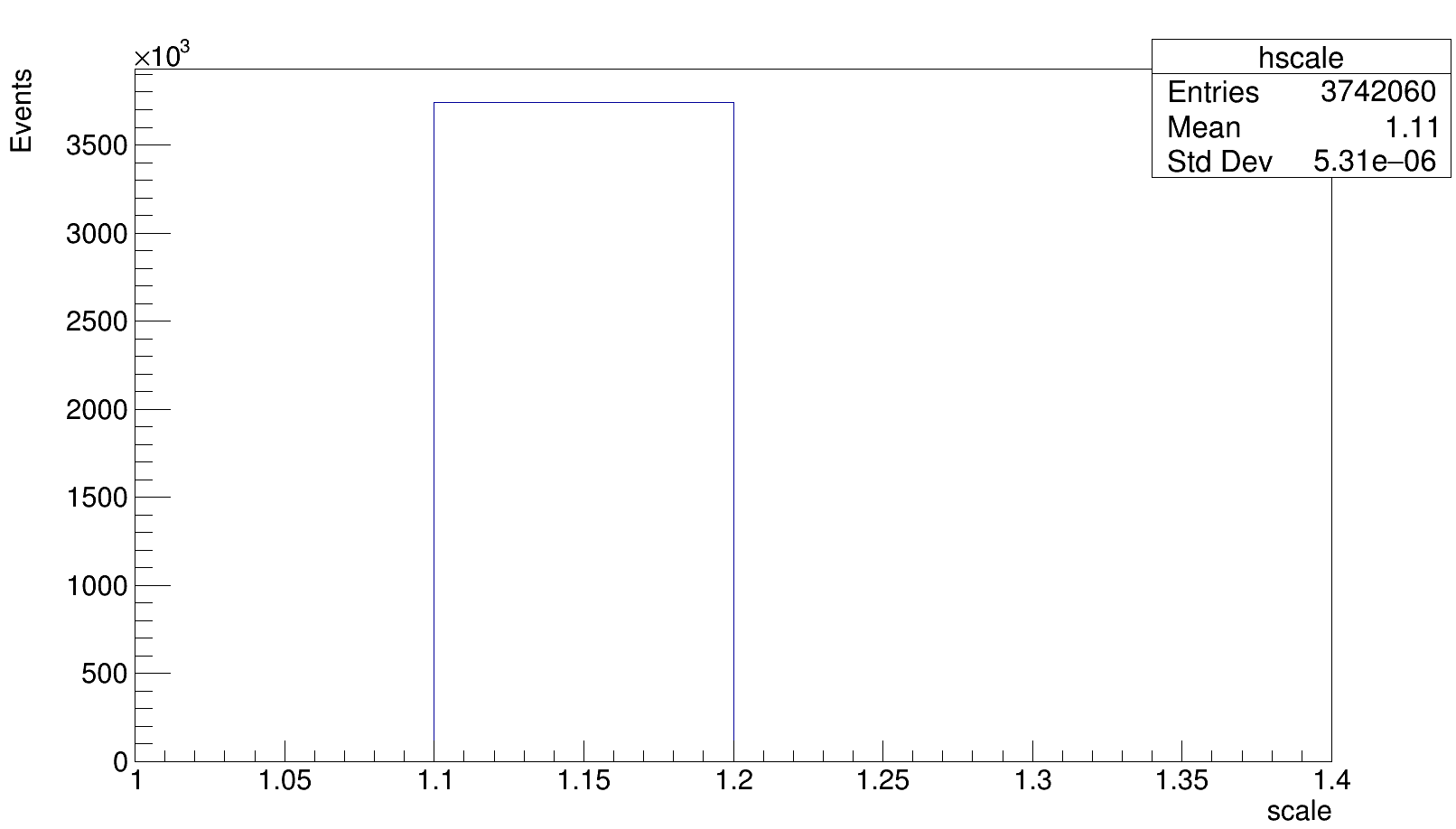

As a cross-check, this is the histogram of the scale column:

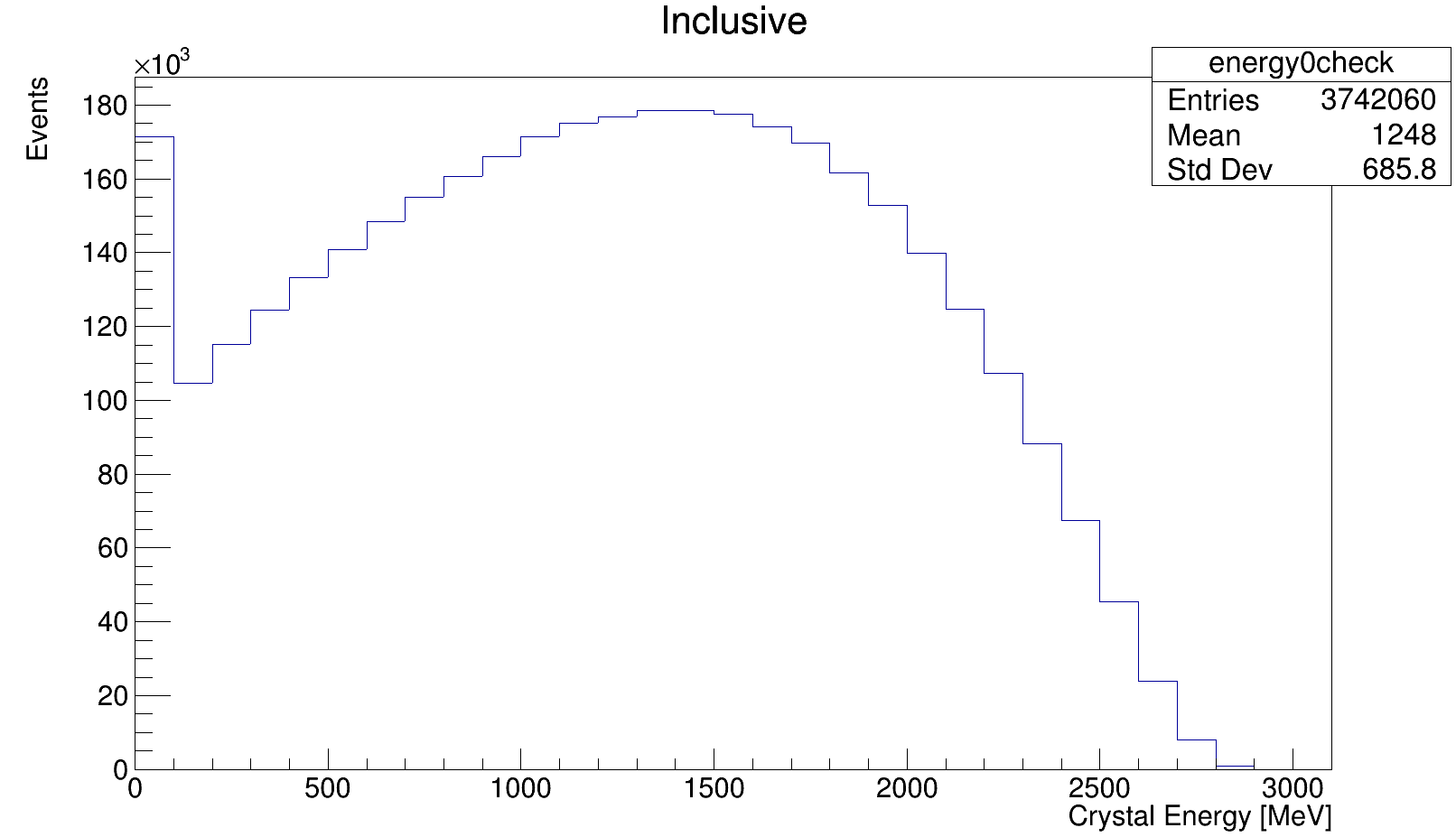

and this is the energy spectrum before applying the conditional scale via the lambda function:

May I know whether other users have run into this problem, or am I missing something fundamental here…

Thanks!

ROOT Version: 6.26/08
Platform: Linux
Compiler: shell root-config --cxx

Danilo

March 12, 2024, 7:50pm

Hi Siewyan,

What is happening is a bit weird since there is a string conversion in the middle, which is unnecessary. The correct code, if I understand the example (please correct me if I am wrong!) would be:

    double scale = 1.11;
    // Define returns a new node, so keep the result
    rdf = rdf.Define("scale", [&scale]() { return scale; });
    rdf = rdf.Define("Enprime", applySF, {"Energy", "scale"});
    auto histo = rdf.Histo1D(TH1DModel, "Enprime");

If you have one single scale factor:

    const auto scale = 1.11;
    auto applySF = [&scale](double en) {
        return (en > 500) ? en * scale : en;
    };
    // Add the scaled column to the existing node
    rdf = rdf.Define("Enprime", applySF, {"Energy"});
    auto histo = rdf.Histo1D(TH1DModel, "Enprime");

I hope this helps!

Cheers,

Hi Danilo,

Thanks for the suggestion; however, neither approach works.

I tried the first method, and it gave the same result.

The second method shows a cutoff: the histogram is empty for en > 500…

I also tried disabling multi-threading, and it shows the same result…

It seems a bit weird, though…

Siewyan

Here is mock-up code that reproduces the issue:

    #include "ROOT/RDataFrame.hxx"
    #include "ROOT/RDFHelpers.hxx"
    #include "ROOT/RVec.hxx"
    #include "ROOT/RSnapshotOptions.hxx"
    #include "TFile.h"

    using namespace ROOT::RDF;

    int main() {
        double scale = 1.11;

        // Scale energies above 500 MeV; leave the rest unchanged
        auto applySF = [](double en, double sf) {
            return (en > 500) ? en * sf : en;
        };

        ROOT::RDataFrame df("skim_g2phase", "trimmed_gm2ringsim_muon_gasgun.root");
        auto rdf = RNode(df);
        rdf = rdf.Define("scale", [&scale]() { return scale; });
        rdf = rdf.Define("Enprime", applySF, {"caloHitEdep", "scale"});

        // 31 bins of 100 MeV each, from 0 to 3100 MeV
        TH1DModel hmodel = TH1DModel("hmodel", "; Energy [MeV]; Events", 31, 0, 3100);
        auto plot = rdf.Histo1D(hmodel, "Enprime");

        TFile *fout = TFile::Open("test.root", "RECREATE");
        plot.GetPtr()->Write();  // accessing the result runs the event loop
        fout->Close();

        return 0;
    }

The test ROOT file can be found here: trimmed_gm2ringsim_muon_gasgun.root (242.7 KB)

The compile command is:

    COMPILER=$(shell root-config --cxx)
    FLAGS=$(shell root-config --cflags --libs) -g -O3 -Wall -Wextra -Wpedantic
    INCLUDE=-I $(PLUGIN)
    $(COMPILER) $(FLAGS) $(INCLUDE) $< -o bin/$@

I would appreciate it if you could take a look. Thanks!

Hello again,

I think I made a fool of myself. The dip is expected: the energies of the events in the 500-600 MeV bin are scaled up, so a significant fraction (≳11%) of those events migrates to the next bin, 600-700 MeV.

It is statistics, not ROOT. I will close the topic for now.
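
To make the bin-migration argument concrete, here is a minimal sketch in plain C++ (no ROOT), assuming a flat toy spectrum rather than the real data. The helpers `fillHistogram`, `makeFlatSpectrum` and `scaledToyHistogram` are hypothetical names introduced only for this illustration; `applySF` mirrors the lambda used above, and the binning (31 bins from 0 to 3100 MeV) matches the mock-up.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Same conditional scaling as the applySF lambda in the thread.
double applySF(double en, double sf) {
    return (en > 500.0) ? en * sf : en;
}

// Fill a fixed-width histogram over [lo, hi); values outside the range are dropped.
std::vector<long> fillHistogram(const std::vector<double>& values,
                                std::size_t nBins, double lo, double hi) {
    assert(nBins > 0 && hi > lo);
    std::vector<long> counts(nBins, 0);
    const double width = (hi - lo) / static_cast<double>(nBins);
    for (double v : values) {
        if (v < lo || v >= hi) continue;
        counts[static_cast<std::size_t>((v - lo) / width)] += 1;
    }
    return counts;
}

// Toy input (an assumption, not the real data): a flat spectrum with one
// event per MeV at 0.5, 1.5, ..., 2999.5 MeV.
std::vector<double> makeFlatSpectrum() {
    std::vector<double> energies;
    for (int i = 0; i < 3000; ++i) energies.push_back(i + 0.5);
    return energies;
}

// Histogram the toy spectrum after scaling, using the binning from the thread.
std::vector<long> scaledToyHistogram(double sf) {
    std::vector<double> scaled;
    for (double en : makeFlatSpectrum()) scaled.push_back(applySF(en, sf));
    return fillHistogram(scaled, 31, 0.0, 3100.0);
}
```

With `sf = 1.11`, this toy gives 100 events in the 400-500 MeV bin, 41 in the 500-600 MeV bin and 90 in the 600-700 MeV bin. The 500-600 MeV bin keeps only events whose original energy is below 600/1.11 ≈ 540.5 MeV and receives nothing from below, because events at or under 500 MeV are not scaled. Every later bin collects events from an original window about 100/1.11 ≈ 90 MeV wide, so the spectrum looks regular again after the dip. The exact numbers depend on the shape of the real spectrum.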

SY